Catching up here.

Derek,

Do we have traceroutes from all users? Does anything in vCenter show any system resource constraints?

On Thursday, April 4, 2019, Person, Arthur A. <aap1@xxxxxxx> wrote:

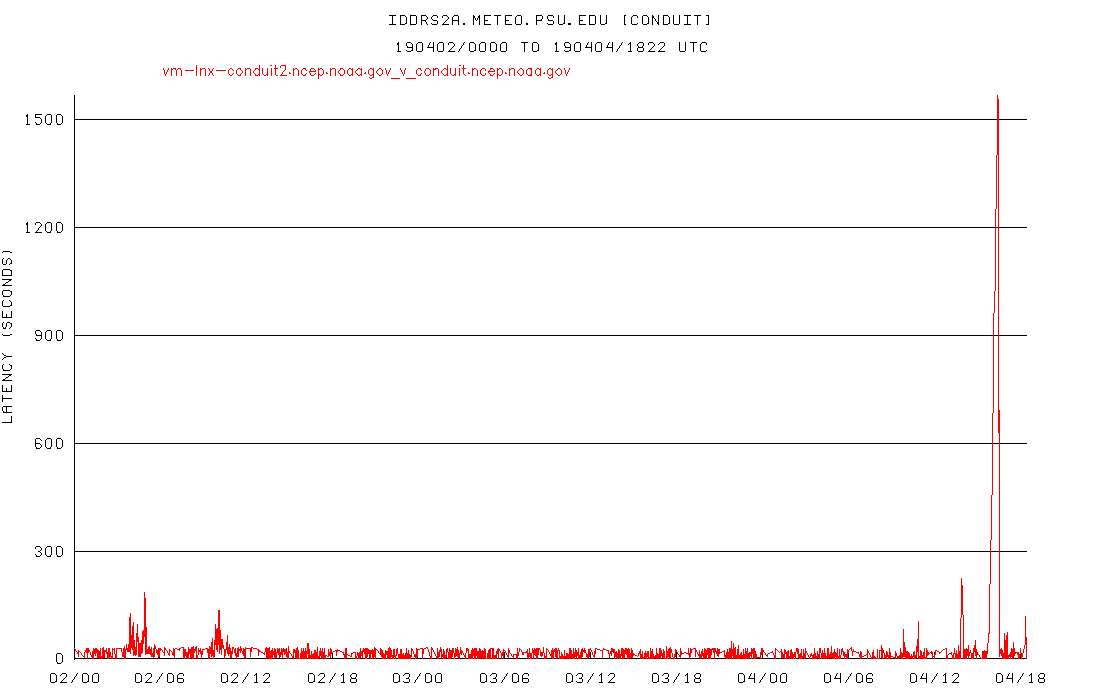

> Yeh, definitely looks "blipier" starting around 7Z this morning, but

> nothing like it was before. And all last night was clean. Here's our

> graph with a 2-way split, a huge improvement over what it was before the

> switch to Boulder:

>

>

>

> Agree with Pete that this morning's data probably isn't a good test since

> there were other factors. Since this seems so much better, I'm going to

> try switching to no split as an experiment and see how it holds up.

>

>

> Art

>

>

> Arthur A. Person

> Assistant Research Professor, System Administrator

> Penn State Department of Meteorology and Atmospheric Science

> email: aap1@xxxxxxx, phone: 814-863-1563

>

>

> ------------------------------

> *From:* Pete Pokrandt <poker@xxxxxxxxxxxx>

> *Sent:* Thursday, April 4, 2019 1:51 PM

> *To:* Derek VanPelt - NOAA Affiliate

> *Cc:* Person, Arthur A.; Gilbert Sebenste; Anne Myckow - NOAA Affiliate;

> conduit@xxxxxxxxxxxxxxxx; _NCEP.List.pmb-dataflow;

> support-conduit@xxxxxxxxxxxxxxxx

> *Subject:* Re: [Ncep.list.pmb-dataflow] [conduit] Large lags on CONDUIT

> feed - started a week or so ago

>

> Ah, so perhaps not a good test. I'll set it back to a 5-way split and see

> how it looks tomorrow.

>

> Thanks for the info,

> Pete

>

>

>


> --

> Pete Pokrandt - Systems Programmer

> UW-Madison Dept of Atmospheric and Oceanic Sciences

> 608-262-3086 - poker@xxxxxxxxxxxx

>

> ------------------------------

> *From:* Derek VanPelt - NOAA Affiliate <derek.vanpelt@xxxxxxxx>

> *Sent:* Thursday, April 4, 2019 12:38 PM

> *To:* Pete Pokrandt

> *Cc:* Person, Arthur A.; Gilbert Sebenste; Anne Myckow - NOAA Affiliate;

> conduit@xxxxxxxxxxxxxxxx; _NCEP.List.pmb-dataflow;

> support-conduit@xxxxxxxxxxxxxxxx

> *Subject:* Re: [Ncep.list.pmb-dataflow] [conduit] Large lags on CONDUIT

> feed - started a week or so ago

>

> Hi Pete -- we did have a separate issue hit the CONDUIT feed today. We

> should be recovering now, but the backlog was sizeable. If these numbers

> are not back to the baseline in the next hour or so please let us know. We

> are also watching our queues and they are decreasing, but not as quickly as

> we had hoped.

>

> Thank you,

>

> Derek

>

> On Thu, Apr 4, 2019 at 1:26 PM 'Pete Pokrandt' via _NCEP list.pmb-dataflow

> <ncep.list.pmb-dataflow@xxxxxxxx> wrote:

>

> FYI - there is still a much larger lag for the 12 UTC run with a 5-way

> split compared to a 10-way split. It's better since everything else failed

> over to Boulder, but I'd venture to guess that's not the root of the

> problem.

>

>

>

> Prior to whatever is going on to cause this, I don't recall ever seeing

> lags this large with a 5-way split. It looked much more like the left hand

> side of this graph, with small increases in lag with each 6 hourly model

> run cycle, but more like 100 seconds vs the ~900 that I got this morning.

>

> FYI I am going to change back to a 10-way split for now.

>

> Pete

>

>

>

>


> --

> Pete Pokrandt - Systems Programmer

> UW-Madison Dept of Atmospheric and Oceanic Sciences

> 608-262-3086 - poker@xxxxxxxxxxxx

>

> ------------------------------

> *From:* conduit-bounces@xxxxxxxxxxxxxxxx <conduit-bounces@xxxxxxxxxxxxxxxx>

> on behalf of Pete Pokrandt <poker@xxxxxxxxxxxx>

> *Sent:* Wednesday, April 3, 2019 4:57 PM

> *To:* Person, Arthur A.; Gilbert Sebenste; Anne Myckow - NOAA Affiliate

> *Cc:* conduit@xxxxxxxxxxxxxxxx; _NCEP.List.pmb-dataflow;

> support-conduit@xxxxxxxxxxxxxxxx

> *Subject:* Re: [conduit] [Ncep.list.pmb-dataflow] Large lags on CONDUIT

> feed - started a week or so ago

>

> Sorry, was out this morning and just had a chance to look into this. I

> concur with Art and Gilbert that things appear to have gotten better

> starting with the failover of everything else to Boulder yesterday. I will

> also reconfigure to go back to a 5-way split (as opposed to the 10-way

> split that I've been using since this issue began) and keep an eye on

> tomorrow's 12 UTC model run cycle - if the lags go up, it usually happens

> worst during that cycle, shortly before 18 UTC each day.

>

> I'll report back tomorrow how it looks, or you can see at

>

> http://rtstats.unidata.ucar.edu/cgi-bin/rtstats/iddstats_nc?CONDUIT+idd.aos.wisc.edu

>

> Thanks,

> Pete

>

>

>


> --

> Pete Pokrandt - Systems Programmer

> UW-Madison Dept of Atmospheric and Oceanic Sciences

> 608-262-3086 - poker@xxxxxxxxxxxx

>

> ------------------------------

> *From:* conduit-bounces@xxxxxxxxxxxxxxxx <conduit-bounces@xxxxxxxxxxxxxxxx>

> on behalf of Person, Arthur A. <aap1@xxxxxxx>

> *Sent:* Wednesday, April 3, 2019 4:04 PM

> *To:* Gilbert Sebenste; Anne Myckow - NOAA Affiliate

> *Cc:* conduit@xxxxxxxxxxxxxxxx; _NCEP.List.pmb-dataflow;

> support-conduit@xxxxxxxxxxxxxxxx

> *Subject:* Re: [conduit] [Ncep.list.pmb-dataflow] Large lags on CONDUIT

> feed - started a week or so ago

>

>

> Anne,

>

>

> I'll hop back in the loop here... for some reason these replies started

> going into my junk file (bleh). Anyway, I agree with Gilbert's

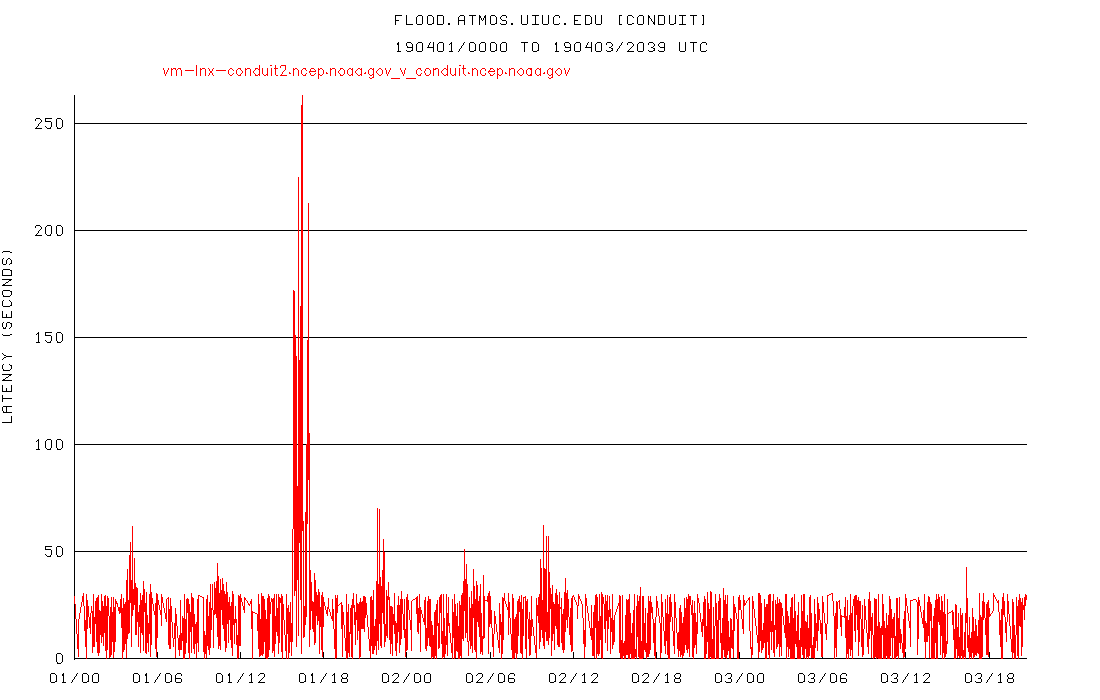

> assessment. Things turned real clean around 12Z yesterday, looking at the

> graphs. I usually look at flood.atmos.uiuc.edu

> when there are problems, as their connection always seems to be the

> cleanest. If there are even small blips or ups and downs in their

> latencies, that usually means there's a network aberration somewhere that

> usually amplifies into hundreds or thousands of seconds at our site and

> elsewhere. Looking at their graph now, you can see the blipiness up until

> 12Z yesterday, and then it's flat (except for the one spike around 16Z

> today which I would ignore):

>

>

>

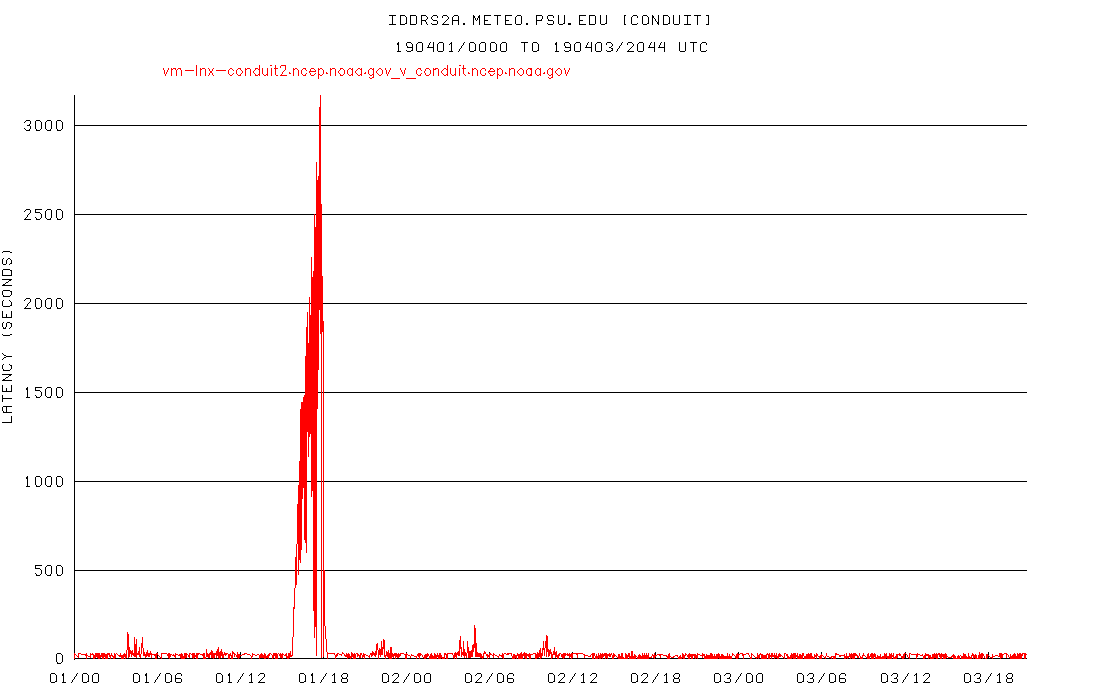

> Our direct-connected site, which is using a 10-way split right now, also

> shows a return to calmness in the latencies:

>

>

> Prior to the recent latency jump, I did not use split requests and the

> reception had been stellar for quite some time. It's my suspicion that

> this is a networking congestion issue somewhere close to the source since

> it seems to affect all downstream sites. For that reason, I don't think

> solving this problem should necessarily involve upgrading your server

> software, but rather identifying what's jamming up the network near D.C.,

> and testing this by switching to Boulder was an excellent idea. I will now

> try switching our system to a two-way split to see if this performance

> holds up with fewer pipes. Thanks for your help and I'll let you know

> what I find out.

>

>

> Art

>

>

> Arthur A. Person

> Assistant Research Professor, System Administrator

> Penn State Department of Meteorology and Atmospheric Science

> email: aap1@xxxxxxx, phone: 814-863-1563

>

>

> ------------------------------

> *From:* conduit-bounces@xxxxxxxxxxxxxxxx <conduit-bounces@xxxxxxxxxxxxxxxx>

> on behalf of Gilbert Sebenste <gilbert@xxxxxxxxxxxxxxxx>

> *Sent:* Wednesday, April 3, 2019 4:07 PM

> *To:* Anne Myckow - NOAA Affiliate

> *Cc:* conduit@xxxxxxxxxxxxxxxx; _NCEP.List.pmb-dataflow;

> support-conduit@xxxxxxxxxxxxxxxx

> *Subject:* Re: [conduit] [Ncep.list.pmb-dataflow] Large lags on CONDUIT

> feed - started a week or so ago

>

> Hello Anne,

>

> I'll jump in here as well. Consider the CONDUIT delays at UNIDATA:

>

> http://rtstats.unidata.ucar.edu/cgi-bin/rtstats/iddstats_nc?CONDUIT+conduit.unidata.ucar.edu

>

>

> And now, Wisconsin:

>

> http://rtstats.unidata.ucar.edu/cgi-bin/rtstats/iddstats_nc?CONDUIT+idd.aos.wisc.edu

>

>

> And finally, the University of Washington:

>

> http://rtstats.unidata.ucar.edu/cgi-bin/rtstats/iddstats_nc?CONDUIT+freshair1.atmos.washington.edu

>

>

> All three of whom have direct feeds from you. Flipping over to Boulder

> definitely caused a major improvement. There was still a brief spike in

> delay, but much shorter and minimal compared to what it was.

>

> Gilbert

>

> On Wed, Apr 3, 2019 at 10:03 AM Anne Myckow - NOAA Affiliate <

> anne.myckow@xxxxxxxx> wrote:

>

> Hi Pete,

>

> As of yesterday we failed almost all of our applications to our site in

> Boulder (meaning away from CONDUIT). Have you noticed an improvement in

> your speeds since yesterday afternoon? If so this will give us a clue that

> maybe there's something interfering on our side that isn't specifically

> CONDUIT, but another app that might be causing congestion. (And if it's the

> same then that's a clue in the other direction.)

>

> Thanks,

> Anne

>

> On Mon, Apr 1, 2019 at 3:24 PM Pete Pokrandt <poker@xxxxxxxxxxxx> wrote:

>

> The lag here at UW-Madison was up to 1200 seconds today, and that's with a

> 10-way split feed. Whatever is causing the issue has definitely not been

> resolved, and historically is worse during the work week than on the

> weekends. If that helps at all.

>

> Pete

>

>

>


> --

> Pete Pokrandt - Systems Programmer

> UW-Madison Dept of Atmospheric and Oceanic Sciences

> 608-262-3086 - poker@xxxxxxxxxxxx

>

> ------------------------------

> *From:* Anne Myckow - NOAA Affiliate <anne.myckow@xxxxxxxx>

> *Sent:* Thursday, March 28, 2019 4:28 PM

> *To:* Person, Arthur A.

> *Cc:* Carissa Klemmer - NOAA Federal; Pete Pokrandt;

> _NCEP.List.pmb-dataflow; conduit@xxxxxxxxxxxxxxxx;

> support-conduit@xxxxxxxxxxxxxxxx

> *Subject:* Re: [Ncep.list.pmb-dataflow] Large lags on CONDUIT feed -

> started a week or so ago

>

> Hello Art,

>

> We will not be upgrading to version 6.13 on these systems as they are not

> robust enough to support the local logging inherent in the new version.

>

> I will check in with my team on whether there are any further actions we can

> take to try and troubleshoot this issue, but I fear we may be at the limit

> of our ability to make this better.

>

> I’ll let you know tomorrow where we stand. Thanks.

> Anne

>

> On Mon, Mar 25, 2019 at 3:00 PM Person, Arthur A. <aap1@xxxxxxx> wrote:

>

> Carissa,

>

>

> Can you report any status on this inquiry?

>

>

> Thanks... Art

>

>

> Arthur A. Person

> Assistant Research Professor, System Administrator

> Penn State Department of Meteorology and Atmospheric Science

> email: aap1@xxxxxxx, phone: 814-863-1563

>

>

> ------------------------------

> *From:* Carissa Klemmer - NOAA Federal <carissa.l.klemmer@xxxxxxxx>

> *Sent:* Tuesday, March 12, 2019 8:30 AM

> *To:* Pete Pokrandt

> *Cc:* Person, Arthur A.; conduit@xxxxxxxxxxxxxxxx;

> support-conduit@xxxxxxxxxxxxxxxx; _NCEP.List.pmb-dataflow

> *Subject:* Re: Large lags on CONDUIT feed - started a week or so ago

>

> Hi Everyone

>

> I’ve added the Dataflow team email to the thread. I haven’t heard that any

> changes were made or that any issues were found. But the team can look

> today and see if we have any signifiers of overall slowness with anything.

>

> Dataflow, try taking a look at the new Citrix or VM troubleshooting tools

> to see if there are any abnormal signatures that may explain this.

>

> On Monday, March 11, 2019, Pete Pokrandt <poker@xxxxxxxxxxxx> wrote:

>

> Art,

>

> I don't know if NCEP ever figured anything out, but I've been able to keep

> my latencies reasonable (300-600s max, mostly during the 12 UTC model

> suite) by splitting my CONDUIT request 10 ways, instead of the 5 that I had

> been doing, or in a single request. Maybe give that a try and see if it

> helps at all.

>

> Pete

>

>

>


> --

> Pete Pokrandt - Systems Programmer

> UW-Madison Dept of Atmospheric and Oceanic Sciences

> 608-262-3086 - poker@xxxxxxxxxxxx

>

> ------------------------------

> *From:* Person, Arthur A. <aap1@xxxxxxx>

> *Sent:* Monday, March 11, 2019 3:45 PM

> *To:* Holly Uhlenhake - NOAA Federal; Pete Pokrandt

> *Cc:* conduit@xxxxxxxxxxxxxxxx; support-conduit@xxxxxxxxxxxxxxxx

> *Subject:* Re: [conduit] Large lags on CONDUIT feed - started a week or

> so ago

>

>

> Holly,

>

>

> Was there any resolution to this on the NCEP end? I'm still seeing

> terrible delays (1000-4000 seconds) receiving data from

> conduit.ncep.noaa.gov.

> It would be helpful to know if things are resolved at NCEP's end so I know

> whether to look further down the line.

>

>

> Thanks... Art

>

>

> Arthur A. Person

> Assistant Research Professor, System Administrator

> Penn State Department of Meteorology and Atmospheric Science

> email: aap1@xxxxxxx, phone: 814-863-1563

>

>

> ------------------------------

> *From:* conduit-bounces@xxxxxxxxxxxxxxxx <conduit-bounces@xxxxxxxxxxxxxxxx>

> on behalf of Holly Uhlenhake - NOAA Federal <holly.uhlenhake@xxxxxxxx>

> *Sent:* Thursday, February 21, 2019 12:05 PM

> *To:* Pete Pokrandt

> *Cc:* conduit@xxxxxxxxxxxxxxxx; support-conduit@xxxxxxxxxxxxxxxx

> *Subject:* Re: [conduit] Large lags on CONDUIT feed - started a week or

> so ago

>

> Hi Pete,

>

> We'll take a look and see if we can figure out what might be going on. We

> haven't done anything to try and address this yet, but based on your

> analysis I'm suspicious that it might be tied to a resource constraint on

> the VM or the blade it resides on.

>

> Thanks,

> Holly Uhlenhake

> Acting Dataflow Team Lead

>

> On Thu, Feb 21, 2019 at 11:32 AM Pete Pokrandt <poker@xxxxxxxxxxxx> wrote:

>

> Just FYI, data is flowing, but the large lags continue.

>

> http://rtstats.unidata.ucar.edu/cgi-bin/rtstats/iddstats_nc?CONDUIT+idd.aos.wisc.edu

> http://rtstats.unidata.ucar.edu/cgi-bin/rtstats/iddstats_nc?CONDUIT+conduit.unidata.ucar.edu

>

> Pete

>

>

>


> --

> Pete Pokrandt - Systems Programmer

> UW-Madison Dept of Atmospheric and Oceanic Sciences

> 608-262-3086 - poker@xxxxxxxxxxxx

>

> ------------------------------

> *From:* conduit-bounces@xxxxxxxxxxxxxxxx <conduit-bounces@xxxxxxxxxxxxxxxx>

> on behalf of Pete Pokrandt <poker@xxxxxxxxxxxx>

> *Sent:* Wednesday, February 20, 2019 12:07 PM

> *To:* Carissa Klemmer - NOAA Federal

> *Cc:* conduit@xxxxxxxxxxxxxxxx; support-conduit@xxxxxxxxxxxxxxxx

> *Subject:* Re: [conduit] Large lags on CONDUIT feed - started a week or

> so ago

>

> Data is flowing again - picked up somewhere in the GEFS. Maybe CONDUIT

> server was restarted, or ldm on it? Lags are large (3000s+) but dropping

> slowly

>

> Pete

>

>

>


> --

> Pete Pokrandt - Systems Programmer

> UW-Madison Dept of Atmospheric and Oceanic Sciences

> 608-262-3086 - poker@xxxxxxxxxxxx

>

> ------------------------------

> *From:* conduit-bounces@xxxxxxxxxxxxxxxx <conduit-bounces@xxxxxxxxxxxxxxxx>

> on behalf of Pete Pokrandt <poker@xxxxxxxxxxxx>

> *Sent:* Wednesday, February 20, 2019 11:56 AM

> *To:* Carissa Klemmer - NOAA Federal

> *Cc:* conduit@xxxxxxxxxxxxxxxx; support-conduit@xxxxxxxxxxxxxxxx

> *Subject:* Re: [conduit] Large lags on CONDUIT feed - started a week or

> so ago

>

> Just a quick follow-up - we started falling far enough behind (3600+ sec)

> that we are losing data. We got short files starting at 174h into the GFS

> run, and only got (incomplete) data through 207h.

>

> We have now not received any data on CONDUIT since 11:27 AM CST (1727 UTC)

> today (Wed Feb 20)

>

> Pete

>

>

>


> --

> Pete Pokrandt - Systems Programmer

> UW-Madison Dept of Atmospheric and Oceanic Sciences

> 608-262-3086 - poker@xxxxxxxxxxxx

>

> ------------------------------

> *From:* conduit-bounces@xxxxxxxxxxxxxxxx <conduit-bounces@xxxxxxxxxxxxxxxx>

> on behalf of Pete Pokrandt <poker@xxxxxxxxxxxx>

> *Sent:* Wednesday, February 20, 2019 11:28 AM

> *To:* Carissa Klemmer - NOAA Federal

> *Cc:* conduit@xxxxxxxxxxxxxxxx; support-conduit@xxxxxxxxxxxxxxxx

> *Subject:* [conduit] Large lags on CONDUIT feed - started a week or so ago

>

> Carissa,

>

> We have been feeding CONDUIT using a 5 way split feed direct from

> conduit.ncep.noaa.gov,

> and it had been really good for some time, lags 30-60 seconds or less.

>

> However, the past week or so, we've been seeing some very large lags

> during each 6 hour model suite - Unidata is also seeing these - they are

> also feeding direct from conduit.ncep.noaa.gov.

>

> http://rtstats.unidata.ucar.edu/cgi-bin/rtstats/iddstats_nc?CONDUIT+idd.aos.wisc.edu

>

> http://rtstats.unidata.ucar.edu/cgi-bin/rtstats/iddstats_nc?CONDUIT+conduit.unidata.ucar.edu

>

>

> Any idea what's going on, or how we can find out?

>

> Thanks!

> Pete

>

>

>


> --

> Pete Pokrandt - Systems Programmer

> UW-Madison Dept of Atmospheric and Oceanic Sciences

> 608-262-3086 - poker@xxxxxxxxxxxx

> _______________________________________________

> NOTE: All exchanges posted to Unidata maintained email lists are

> recorded in the Unidata inquiry tracking system and made publicly

> available through the web. Users who post to any of the lists we

> maintain are reminded to remove any personal information that they

> do not want to be made public.

>

>

> conduit mailing list

> conduit@xxxxxxxxxxxxxxxx

> For list information or to unsubscribe, visit:

> http://www.unidata.ucar.edu/mailing_lists/


>

>

>

> --

> Carissa Klemmer

> NCEP Central Operations

> IDSB Branch Chief

> 301-683-3835

>

> _______________________________________________

> Ncep.list.pmb-dataflow mailing list

> Ncep.list.pmb-dataflow@xxxxxxxxxxxxxxxxxxxx

> https://www.lstsrv.ncep.noaa.gov/mailman/listinfo/ncep.list.pmb-dataflow


>

> --

> Anne Myckow

> Lead Dataflow Analyst

> NOAA/NCEP/NCO

> 301-683-3825

>

>

>


>

>

>

> --

> ----

>

> Gilbert Sebenste

> Consulting Meteorologist

> AllisonHouse, LLC


>

>

>

> --

> Derek Van Pelt

> DataFlow Analyst

> NOAA/NCEP/NCO

>

--

Carissa Klemmer

NCEP Central Operations

IDSB Branch Chief

301-683-3835