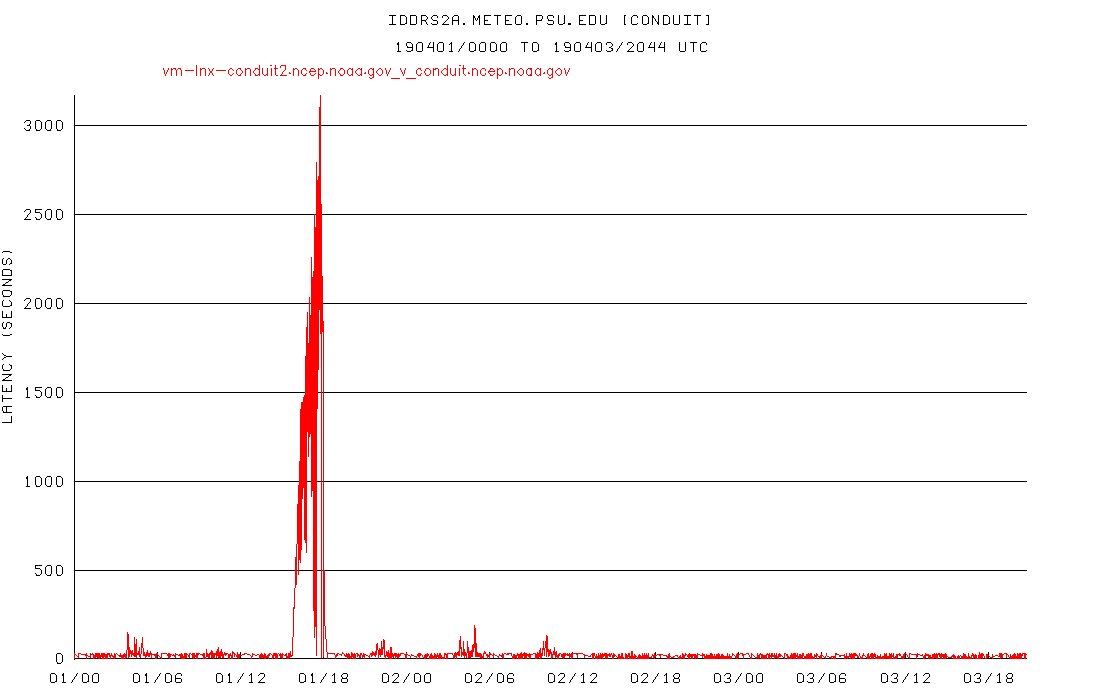

FYI - there is still a much larger lag for the 12 UTC run with a 5-way split

compared to a 10-way split. It's better since everything else failed over to

Boulder, but I'd venture to guess that's not the root of the problem.

[cid:ba34b446-db57-4e7d-809b-c9e973c4d31f]

Prior to whatever is going on to cause this, I don't recall ever seeing lags

this large with a 5-way split. It looked much more like the left hand side of

this graph, with small increases in lag with each 6 hourly model run cycle, but

more like 100 seconds vs the ~900 that I got this morning.

FYI I am going to change back to a 10 way split for now.

Pete

<http://www.weather.com/tv/shows/wx-geeks/video/the-incredible-shrinking-cold-pool>--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - poker@xxxxxxxxxxxx

________________________________

From: conduit-bounces@xxxxxxxxxxxxxxxx <conduit-bounces@xxxxxxxxxxxxxxxx> on

behalf of Pete Pokrandt <poker@xxxxxxxxxxxx>

Sent: Wednesday, April 3, 2019 4:57 PM

To: Person, Arthur A.; Gilbert Sebenste; Anne Myckow - NOAA Affiliate

Cc: conduit@xxxxxxxxxxxxxxxx; _NCEP.List.pmb-dataflow;

support-conduit@xxxxxxxxxxxxxxxx

Subject: Re: [conduit] [Ncep.list.pmb-dataflow] Large lags on CONDUIT feed -

started a week or so ago

Sorry, was out this morning and just had a chance to look into this. I concur

with Art and Gilbert that things appear to have gotten better starting with the

failover of everything else to Boulder yesterday. I will also reconfigure to go

back to a 5-way split (as opposed to the 10-way split that I've been using

since this issue began) and keep an eye on tomorrow's 12 UTC model run cycle -

if the lags go up, it usually happens worst during that cycle, shortly before

18 UTC each day.

I'll report back tomorrow how it looks, or you can see at

http://rtstats.unidata.ucar.edu/cgi-bin/rtstats/iddstats_nc?CONDUIT+idd.aos.wisc.edu

Thanks,

Pete

<http://www.weather.com/tv/shows/wx-geeks/video/the-incredible-shrinking-cold-pool>--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - poker@xxxxxxxxxxxx

________________________________

From: conduit-bounces@xxxxxxxxxxxxxxxx <conduit-bounces@xxxxxxxxxxxxxxxx> on

behalf of Person, Arthur A. <aap1@xxxxxxx>

Sent: Wednesday, April 3, 2019 4:04 PM

To: Gilbert Sebenste; Anne Myckow - NOAA Affiliate

Cc: conduit@xxxxxxxxxxxxxxxx; _NCEP.List.pmb-dataflow;

support-conduit@xxxxxxxxxxxxxxxx

Subject: Re: [conduit] [Ncep.list.pmb-dataflow] Large lags on CONDUIT feed -

started a week or so ago

Anne,

I'll hop back in the loop here... for some reason these replies started going

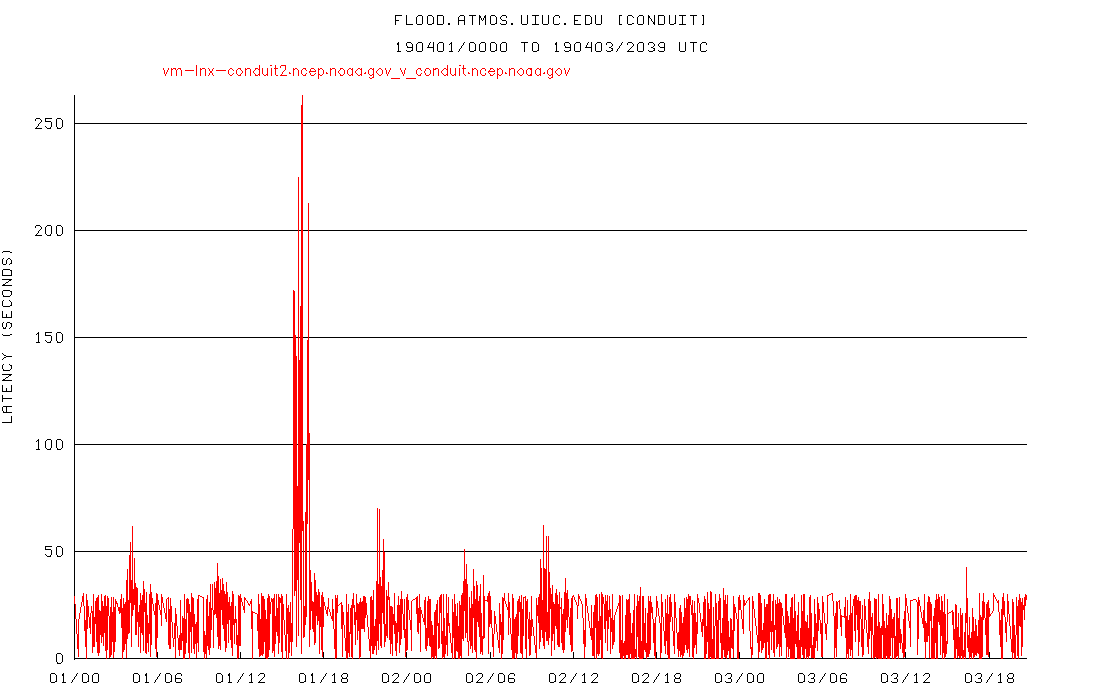

into my junk file (bleh). Anyway, I agree with Gilbert's assessment. Things

turned real clean around 12Z yesterday, looking at the graphs. I usually look

at flood.atmos.uiuc.edu when there are problems as their connection always seems

to be the cleanest. If there are even small blips or ups and downs in their

latencies, that usually means there's a network aberration somewhere that

usually amplifies into hundreds or thousands of seconds at our site and

elsewhere. Looking at their graph now, you can see the blippiness up until 12Z

yesterday, and then it's flat (except for the one spike around 16Z today which

I would ignore):

[cid:a4366062-d09a-4f0e-83c1-e64a4381abe0]

Our direct-connected site, which is using a 10-way split right now, also shows

a return to calmness in the latencies:

[cid:bbefd7e3-1d2d-4a82-8cb6-b05f53591b59]

Prior to the recent latency jump, I did not use split requests and the

reception had been stellar for quite some time. It's my suspicion that this is

a networking congestion issue somewhere close to the source since it seems to

affect all downstream sites. For that reason, I don't think solving this

problem should necessarily involve upgrading your server software, but rather

identifying what's jamming up the network near D.C., and testing this by

switching to Boulder was an excellent idea. I will now try switching our

system to a two-way split to see if this performance holds up with fewer pipes.

Thanks for your help and I'll let you know what I find out.

Art

Arthur A. Person

Assistant Research Professor, System Administrator

Penn State Department of Meteorology and Atmospheric Science

email: aap1@xxxxxxx, phone: 814-863-1563

________________________________

From: conduit-bounces@xxxxxxxxxxxxxxxx <conduit-bounces@xxxxxxxxxxxxxxxx> on

behalf of Gilbert Sebenste <gilbert@xxxxxxxxxxxxxxxx>

Sent: Wednesday, April 3, 2019 4:07 PM

To: Anne Myckow - NOAA Affiliate

Cc: conduit@xxxxxxxxxxxxxxxx; _NCEP.List.pmb-dataflow;

support-conduit@xxxxxxxxxxxxxxxx

Subject: Re: [conduit] [Ncep.list.pmb-dataflow] Large lags on CONDUIT feed -

started a week or so ago

Hello Anne,

I'll jump in here as well. Consider the CONDUIT delays at UNIDATA:

http://rtstats.unidata.ucar.edu/cgi-bin/rtstats/iddstats_nc?CONDUIT+conduit.unidata.ucar.edu

And now, Wisconsin:

http://rtstats.unidata.ucar.edu/cgi-bin/rtstats/iddstats_nc?CONDUIT+idd.aos.wisc.edu

And finally, the University of Washington:

http://rtstats.unidata.ucar.edu/cgi-bin/rtstats/iddstats_nc?CONDUIT+freshair1.atmos.washington.edu

All three of whom have direct feeds from you. Flipping over to Boulder

definitely caused a major improvement. There was still a brief spike in delay,

but much shorter and minimal compared to what it was.

Gilbert

On Wed, Apr 3, 2019 at 10:03 AM Anne Myckow - NOAA Affiliate

<anne.myckow@xxxxxxxx> wrote:

Hi Pete,

As of yesterday we failed almost all of our applications to our site in Boulder

(meaning away from CONDUIT). Have you noticed an improvement in your speeds

since yesterday afternoon? If so this will give us a clue that maybe there's

something interfering on our side that isn't specifically CONDUIT, but another

app that might be causing congestion. (And if it's the same then that's a clue

in the other direction.)

Thanks,

Anne

On Mon, Apr 1, 2019 at 3:24 PM Pete Pokrandt

<poker@xxxxxxxxxxxx> wrote:

The lag here at UW-Madison was up to 1200 seconds today, and that's with a

10-way split feed. Whatever is causing the issue has definitely not been

resolved, and historically is worse during the work week than on the weekends.

If that helps at all.

Pete

<http://www.weather.com/tv/shows/wx-geeks/video/the-incredible-shrinking-cold-pool>--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - poker@xxxxxxxxxxxx

________________________________

From: Anne Myckow - NOAA Affiliate

<anne.myckow@xxxxxxxx>

Sent: Thursday, March 28, 2019 4:28 PM

To: Person, Arthur A.

Cc: Carissa Klemmer - NOAA Federal; Pete Pokrandt; _NCEP.List.pmb-dataflow;

conduit@xxxxxxxxxxxxxxxx;

support-conduit@xxxxxxxxxxxxxxxx

Subject: Re: [Ncep.list.pmb-dataflow] Large lags on CONDUIT feed - started a

week or so ago

Hello Art,

We will not be upgrading to version 6.13 on these systems as they are not

robust enough to support the local logging inherent in the new version.

I will check in with my team on whether there are any further actions we can take to

try and troubleshoot this issue, but I fear we may be at the limit of our

ability to make this better.

I’ll let you know tomorrow where we stand. Thanks.

Anne

On Mon, Mar 25, 2019 at 3:00 PM Person, Arthur A.

<aap1@xxxxxxx> wrote:

Carissa,

Can you report any status on this inquiry?

Thanks... Art

Arthur A. Person

Assistant Research Professor, System Administrator

Penn State Department of Meteorology and Atmospheric Science

email: aap1@xxxxxxx, phone: 814-863-1563

________________________________

From: Carissa Klemmer - NOAA Federal

<carissa.l.klemmer@xxxxxxxx>

Sent: Tuesday, March 12, 2019 8:30 AM

To: Pete Pokrandt

Cc: Person, Arthur A.;

conduit@xxxxxxxxxxxxxxxx;

support-conduit@xxxxxxxxxxxxxxxx;

_NCEP.List.pmb-dataflow

Subject: Re: Large lags on CONDUIT feed - started a week or so ago

Hi Everyone

I’ve added the Dataflow team email to the thread. I haven’t heard that any

changes were made or that any issues were found. But the team can look today

and see if we have any signifiers of overall slowness with anything.

Dataflow, try taking a look at the new Citrix or VM troubleshooting tools to see if

there are any abnormal signatures that may explain this.

On Monday, March 11, 2019, Pete Pokrandt

<poker@xxxxxxxxxxxx> wrote:

Art,

I don't know if NCEP ever figured anything out, but I've been able to keep my

latencies reasonable (300-600s max, mostly during the 12 UTC model suite) by

splitting my CONDUIT request 10 ways, instead of the 5 that I had been doing,

or in a single request. Maybe give that a try and see if it helps at all.
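
For readers unfamiliar with split feeds: an LDM downstream site typically splits a feed by listing several REQUEST entries in ldmd.conf, each with a pattern that matches a disjoint subset of product identifiers, so that each subset travels over its own connection. The sketch below generates such entries in Python. It assumes that every CONDUIT product identifier ends in a sequence-number digit, so that patterns anchored on the final digit partition the feed. The function name `split_requests` is hypothetical, and the exact patterns used at UW-Madison are not given in this thread.

```python
def split_requests(ways, feed="CONDUIT", host="conduit.ncep.noaa.gov"):
    """Return ldmd.conf-style REQUEST lines that partition a feed.

    Assumption: every product identifier ends in a decimal digit, so
    grouping on that last digit yields disjoint, complete subsets.
    """
    if ways < 1 or 10 % ways != 0:
        raise ValueError("ways must divide 10 evenly (1, 2, 5, or 10)")
    per_group = 10 // ways  # how many final digits each request covers
    lines = []
    for group in range(ways):
        first = group * per_group
        digits = "".join(str(d) for d in range(first, first + per_group))
        pattern = f"[{digits}]$"  # match identifiers ending in these digits
        lines.append(f'REQUEST {feed} "{pattern}" {host}')
    return lines


if __name__ == "__main__":
    # Print the five entries for a 5-way split; pass 10 for a 10-way split.
    for line in split_requests(5):
        print(line)
```

Going from 5 to 10 ways halves the number of final digits each connection carries, which may help when per-connection throughput is the bottleneck, at the cost of more open connections to the upstream host.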

Pete

<http://www.weather.com/tv/shows/wx-geeks/video/the-incredible-shrinking-cold-pool>--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - poker@xxxxxxxxxxxx

________________________________

From: Person, Arthur A. <aap1@xxxxxxx>

Sent: Monday, March 11, 2019 3:45 PM

To: Holly Uhlenhake - NOAA Federal; Pete Pokrandt

Cc: conduit@xxxxxxxxxxxxxxxx;

support-conduit@xxxxxxxxxxxxxxxx

Subject: Re: [conduit] Large lags on CONDUIT feed - started a week or so ago

Holly,

Was there any resolution to this on the NCEP end? I'm still seeing terrible

delays (1000-4000 seconds) receiving data from

conduit.ncep.noaa.gov.

It would be helpful to know if things are resolved at NCEP's end so I know

whether to look further down the line.

Thanks... Art

Arthur A. Person

Assistant Research Professor, System Administrator

Penn State Department of Meteorology and Atmospheric Science

email: aap1@xxxxxxx, phone: 814-863-1563

________________________________

From: conduit-bounces@xxxxxxxxxxxxxxxx <conduit-bounces@xxxxxxxxxxxxxxxx> on

behalf of Holly Uhlenhake - NOAA Federal <holly.uhlenhake@xxxxxxxx>

Sent: Thursday, February 21, 2019 12:05 PM

To: Pete Pokrandt

Cc: conduit@xxxxxxxxxxxxxxxx;

support-conduit@xxxxxxxxxxxxxxxx

Subject: Re: [conduit] Large lags on CONDUIT feed - started a week or so ago

Hi Pete,

We'll take a look and see if we can figure out what might be going on. We

haven't done anything to try and address this yet, but based on your analysis

I'm suspicious that it might be tied to a resource constraint on the VM or the

blade it resides on.

Thanks,

Holly Uhlenhake

Acting Dataflow Team Lead

On Thu, Feb 21, 2019 at 11:32 AM Pete Pokrandt

<poker@xxxxxxxxxxxx> wrote:

Just FYI, data is flowing, but the large lags continue.

http://rtstats.unidata.ucar.edu/cgi-bin/rtstats/iddstats_nc?CONDUIT+idd.aos.wisc.edu

http://rtstats.unidata.ucar.edu/cgi-bin/rtstats/iddstats_nc?CONDUIT+conduit.unidata.ucar.edu

Pete

<http://www.weather.com/tv/shows/wx-geeks/video/the-incredible-shrinking-cold-pool>--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - poker@xxxxxxxxxxxx

________________________________

From: conduit-bounces@xxxxxxxxxxxxxxxx <conduit-bounces@xxxxxxxxxxxxxxxx> on

behalf of Pete Pokrandt <poker@xxxxxxxxxxxx>

Sent: Wednesday, February 20, 2019 12:07 PM

To: Carissa Klemmer - NOAA Federal

Cc: conduit@xxxxxxxxxxxxxxxx;

support-conduit@xxxxxxxxxxxxxxxx

Subject: Re: [conduit] Large lags on CONDUIT feed - started a week or so ago

Data is flowing again - picked up somewhere in the GEFS. Maybe CONDUIT server

was restarted, or ldm on it? Lags are large (3000s+) but dropping slowly

Pete

<http://www.weather.com/tv/shows/wx-geeks/video/the-incredible-shrinking-cold-pool>--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - poker@xxxxxxxxxxxx

________________________________

From: conduit-bounces@xxxxxxxxxxxxxxxx <conduit-bounces@xxxxxxxxxxxxxxxx> on

behalf of Pete Pokrandt <poker@xxxxxxxxxxxx>

Sent: Wednesday, February 20, 2019 11:56 AM

To: Carissa Klemmer - NOAA Federal

Cc: conduit@xxxxxxxxxxxxxxxx;

support-conduit@xxxxxxxxxxxxxxxx

Subject: Re: [conduit] Large lags on CONDUIT feed - started a week or so ago

Just a quick follow-up - we started falling far enough behind (3600+ sec) that

we are losing data. We got short files starting at 174h into the GFS run, and

only got (incomplete) data through 207h.

We have now not received any data on CONDUIT since 11:27 AM CST (1727 UTC)

today (Wed Feb 20)

Pete

<http://www.weather.com/tv/shows/wx-geeks/video/the-incredible-shrinking-cold-pool>--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - poker@xxxxxxxxxxxx

________________________________

From: conduit-bounces@xxxxxxxxxxxxxxxx <conduit-bounces@xxxxxxxxxxxxxxxx> on

behalf of Pete Pokrandt <poker@xxxxxxxxxxxx>

Sent: Wednesday, February 20, 2019 11:28 AM

To: Carissa Klemmer - NOAA Federal

Cc: conduit@xxxxxxxxxxxxxxxx;

support-conduit@xxxxxxxxxxxxxxxx

Subject: [conduit] Large lags on CONDUIT feed - started a week or so ago

Carissa,

We have been feeding CONDUIT using a 5 way split feed direct from

conduit.ncep.noaa.gov,

and it had been really good for some time, lags 30-60 seconds or less.

However, the past week or so, we've been seeing some very large lags during

each 6 hour model suite - Unidata is also seeing these - they are also feeding

direct from

conduit.ncep.noaa.gov.

http://rtstats.unidata.ucar.edu/cgi-bin/rtstats/iddstats_nc?CONDUIT+idd.aos.wisc.edu

http://rtstats.unidata.ucar.edu/cgi-bin/rtstats/iddstats_nc?CONDUIT+conduit.unidata.ucar.edu

Any idea what's going on, or how we can find out?

Thanks!

Pete

<http://www.weather.com/tv/shows/wx-geeks/video/the-incredible-shrinking-cold-pool>--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - poker@xxxxxxxxxxxx

_______________________________________________

NOTE: All exchanges posted to Unidata maintained email lists are

recorded in the Unidata inquiry tracking system and made publicly

available through the web. Users who post to any of the lists we

maintain are reminded to remove any personal information that they

do not want to be made public.

conduit mailing list

conduit@xxxxxxxxxxxxxxxx

For list information or to unsubscribe, visit:

http://www.unidata.ucar.edu/mailing_lists/

--

Carissa Klemmer

NCEP Central Operations

IDSB Branch Chief

301-683-3835

_______________________________________________

Ncep.list.pmb-dataflow mailing list

Ncep.list.pmb-dataflow@xxxxxxxxxxxxxxxxxxxx

https://www.lstsrv.ncep.noaa.gov/mailman/listinfo/ncep.list.pmb-dataflow

--

Anne Myckow

Lead Dataflow Analyst

NOAA/NCEP/NCO

301-683-3825

--

----

Gilbert Sebenste

Consulting Meteorologist

AllisonHouse, LLC